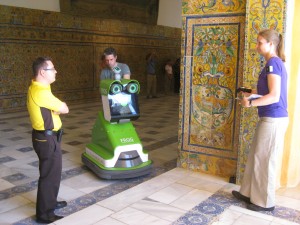

During the FROG meeting in Seville in May 2013, we conducted exploratory studies to find out how naïve people react to a (tour guide) robot that approaches them and gives a short tour. During these experiments, the robot was remotely controlled by one of the partners from Universidad Pablo de Olavide.

On Tuesday we ran an experiment in which the robot approached visitors without taking any further action. This kind of research has previously been done in lab settings, but not in a real-life setting such as ours. The preliminary results show that people tend to react differently than they do in lab situations. In our experiment, people naturally avoided contact with the robot by changing their path slightly, whereas in lab experiments participants stayed where they were asked to stand and let the robot approach.

On Thursday and Friday we ran experiments with a guide robot that gave a short tour through the halls of Charles V. Between sessions we made small changes to the robot's behavior and spoken content. Preliminary results indicate that the robot is not given any personal space (based on the diagram of Hall); many people tend to stand very close to it. This differs from the behavior visitors show when following a human tour guide, where they usually keep a distance of 1.5 to 2.5 meters from the guide. We also found that the way people follow the robot varies: some stand meters away and only turn slightly when the robot drives to the next stop, while others follow right behind it. Visitors following a human tour guide never stand that far away, nor do they follow that closely; instead, they show that they are a group and walk with the guide in the direction the guide has indicated.
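To make the comparison with Hall's diagram concrete, here is a minimal sketch of how a measured visitor-to-robot distance could be mapped to a proxemic zone. The zone boundaries (0.45 m, 1.2 m, 3.6 m) are commonly cited approximations of Hall's zones and are an assumption here, not values taken from our experiments; the names `Zone` and `classify_distance` are hypothetical and only serve the illustration.

```python
from enum import Enum


class Zone(Enum):
    INTIMATE = "intimate"
    PERSONAL = "personal"
    SOCIAL = "social"
    PUBLIC = "public"


# Assumed upper bounds in meters (commonly cited approximations of Hall's zones).
ZONE_UPPER_BOUNDS_M = [
    (0.45, Zone.INTIMATE),
    (1.2, Zone.PERSONAL),
    (3.6, Zone.SOCIAL),
]


def classify_distance(distance_m: float) -> Zone:
    """Return the proxemic zone for a distance given in meters."""
    if distance_m < 0:
        raise ValueError("distance must be non-negative")
    for upper_bound, zone in ZONE_UPPER_BOUNDS_M:
        if distance_m < upper_bound:
            return zone
    # Anything at or beyond the last bound counts as public distance.
    return Zone.PUBLIC


if __name__ == "__main__":
    # Illustrative distances only, not measurements from the study.
    for d in (0.3, 1.0, 2.0, 5.0):
        print(f"{d:.1f} m -> {classify_distance(d).value}")
```

Under these assumed boundaries, the 1.5 to 2.5 meters that visitors usually keep from a human guide falls in the social zone, while anything closer than 1.2 m falls in the personal or intimate zone.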

People tend to look where the robot is looking, although cues in the spoken text may also help indicate where they should look. This suggests that directing visitors' attention by speech alone is not sufficient.

All experiments were videotaped, and the recordings will be analyzed for human behavior: how do people react to the robot, what formations do they form around it, how do they follow it, at what points do they leave the tour, and how can the robot get their attention? Answers to these questions will help to form guidelines for robot behavior.